Your AI model needs human judgment to learn what “good” really looks like

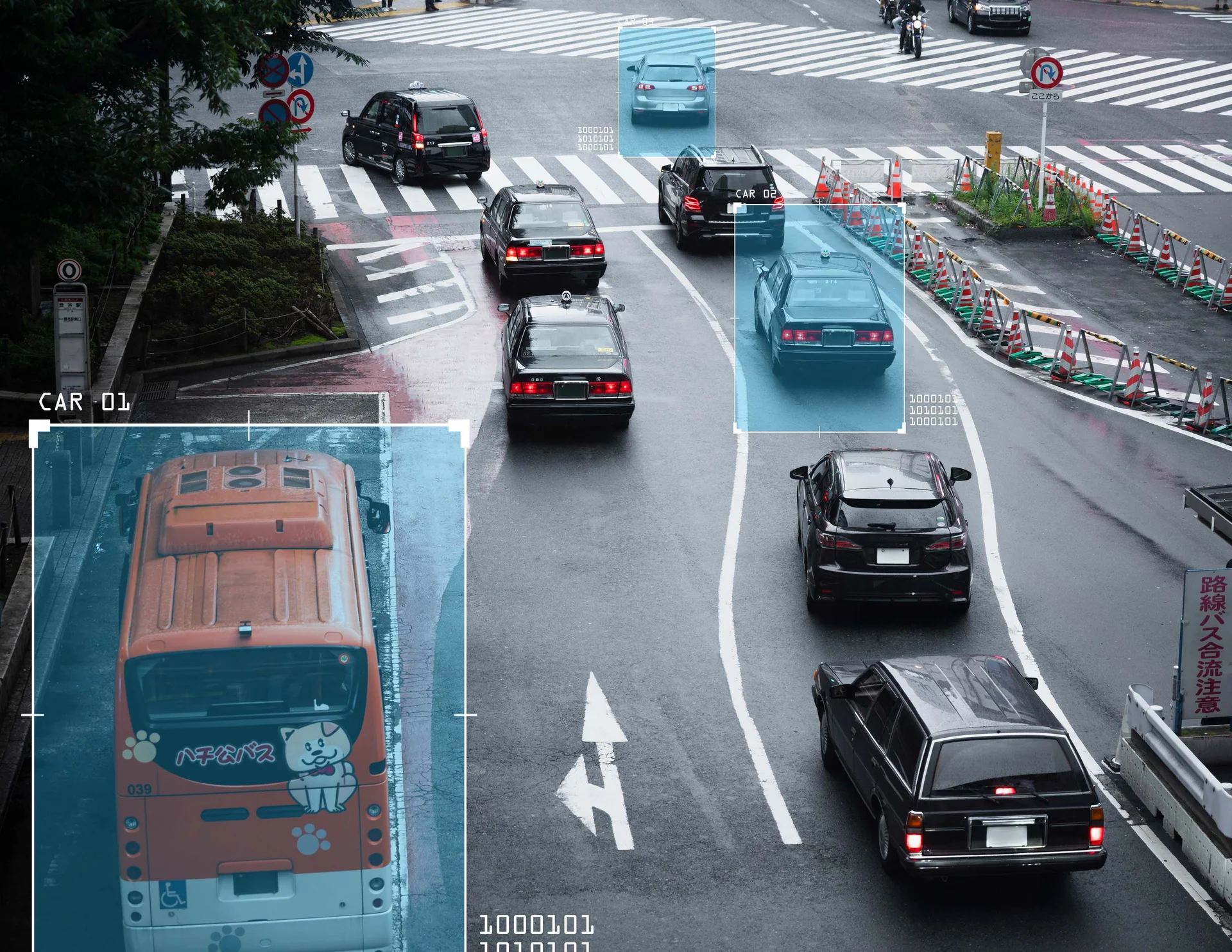

LLMs and other AI systems can generate outputs at scale, but without structured human feedback, they drift. Responses become inconsistent. Hallucinations increase. Edge cases slip through. Models technically work but fail in real-world use.

Building in-house reinforcement learning from human feedback (RLHF) teams is expensive and slow. You need domain-skilled reviewers, consistent guidelines, multi-layer quality control, and the ability to scale feedback loops quickly. Without these, model performance plateaus and deployment timelines stall.

The result? AI models that look promising in testing but underperform in production. Retraining cycles drag on. Trust in outputs erodes.

The Fives Digital Solution

Fives Digital operationalizes RLHF at scale. We deliver structured, high-quality human feedback pipelines that align AI models with real-world expectations, safety requirements, and business goals, while reducing cost and time-to-train.

With 16+ years of experience managing large-scale data operations and a workforce of 3,500+ trained professionals across 9 locations, we support RLHF programs that deliver millions of preference rankings, comparisons, and evaluations annually. Pilot RLHF programs launch in 1–2 weeks and demonstrate measurable accuracy and consistency before scaling to production volumes.