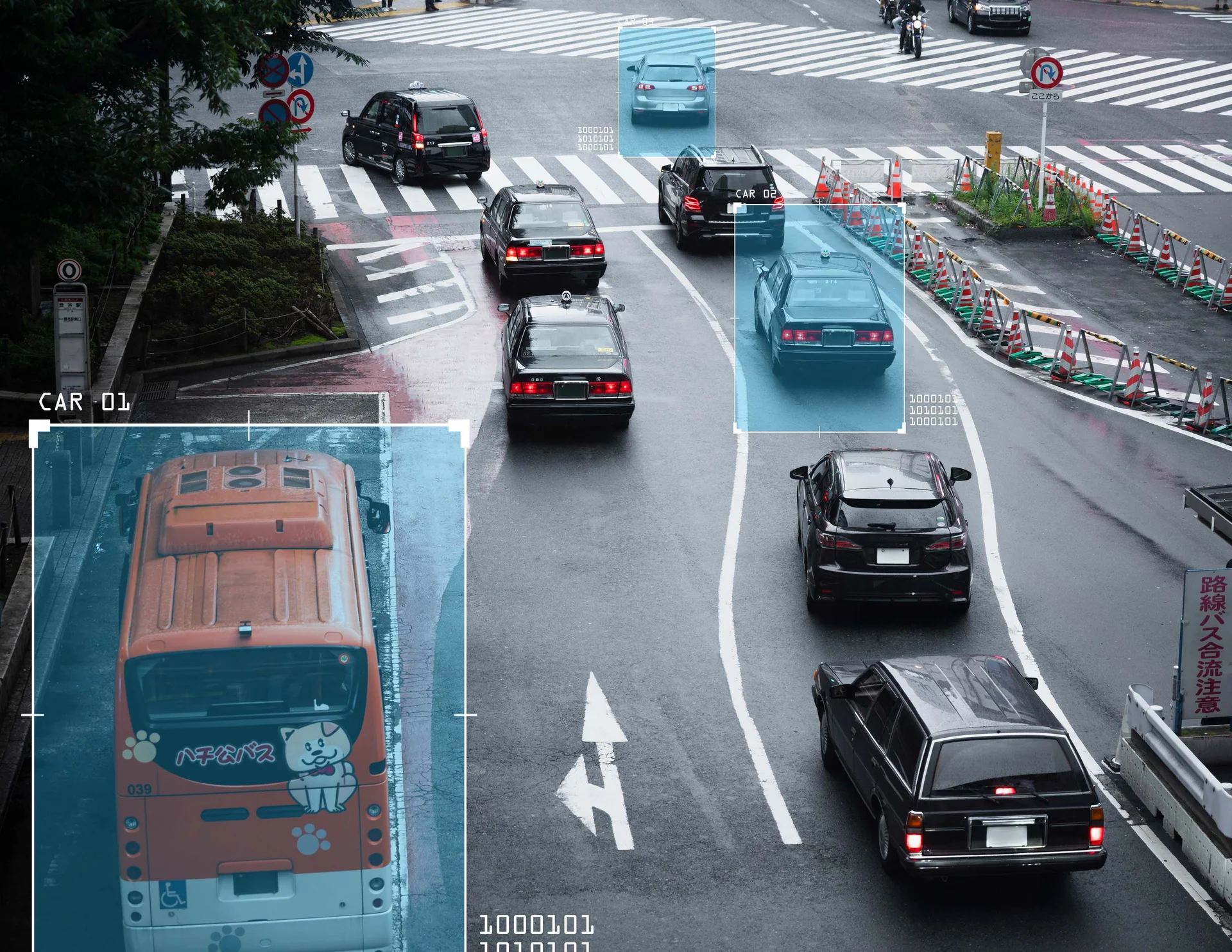

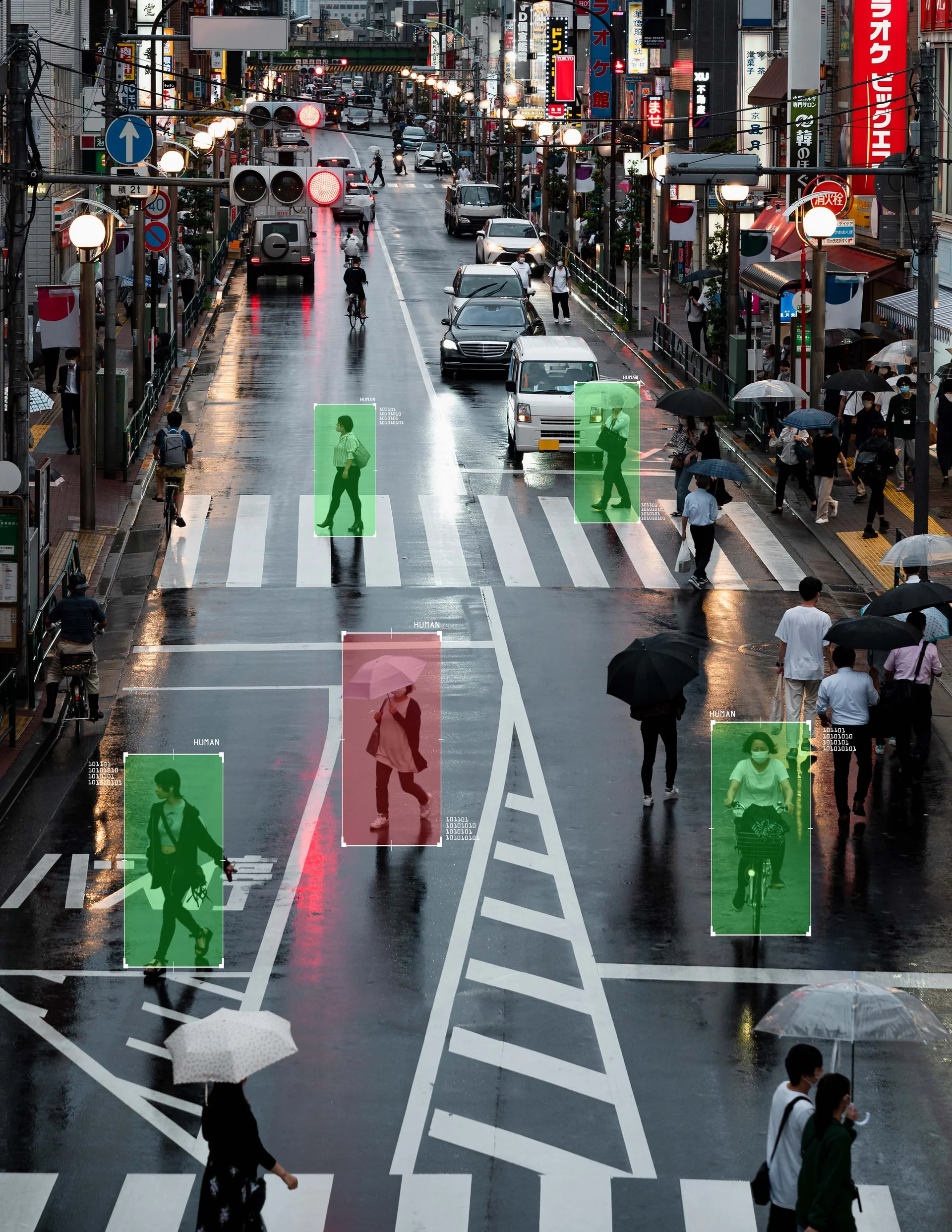

Autonomous vehicle development requires accurately labeled training data from cameras, LiDAR, radar, and other sensors to detect vehicles, pedestrians, cyclists, traffic signs, and road conditions. Annotation quality directly affects perception system performance: mislabeled objects lead to detection failures, and inconsistent annotations across frames create tracking problems.

Autonomous vehicle data annotation requires understanding your specific sensor configurations, following detailed 2D and 3D labeling guidelines consistently, and maintaining quality across large datasets with millions of frames and complex temporal tracking requirements.

FiveS Digital provides scalable autonomous vehicle data annotation services, training annotators on your specific requirements and delivering consistent labeling quality across large sensor datasets.

With 16 years of experience managing data operations and 50 million+ annotations delivered annually, we supply trained annotation teams that work from the detailed guidelines you provide. Our annotators are trained specifically on automotive perception annotation: labeling 2D camera images, annotating 3D LiDAR point clouds, tracking objects across frames, and maintaining consistency based on your reference materials and expert feedback.

We handle camera imagery, LiDAR point clouds, sensor fusion datasets, and multimodal annotations, following your autonomous vehicle taxonomies and specifications. Pilot projects can be deployed in 2-3 weeks with quality validation processes and 40-60% cost savings versus building in-house annotation teams. Teams scale from 20 to 200+ trained annotators based on your data volume and timeline.

Autonomous Vehicle Annotation Services We Provide

2D Camera Annotation: Bounding boxes for vehicles/pedestrians/cyclists/signs, semantic segmentation for drivable areas, polygon annotation for irregular objects, traffic light and sign recognition.

3D LiDAR Annotation: 3D bounding cuboids with position/dimension/rotation, point cloud segmentation, object tracking across frames, occlusion and truncation handling.

Sensor Fusion: Camera-LiDAR alignment, synchronized multimodal annotation, cross-sensor consistency verification, temporal tracking across all sensor streams.

Traffic Infrastructure: Lane markings, road boundaries, crosswalks, traffic signs, traffic lights, parking spaces, construction zones.

Object Tracking: Frame-by-frame object identification, unique ID maintenance, temporal consistency verification, trajectory annotation.

Scenario Annotation: Weather conditions, lighting variations, urban/highway/parking scenarios, edge cases and rare events.
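To make the 3D LiDAR item above concrete, here is a minimal sketch of what a single cuboid label with position, dimensions, and rotation might look like. The `Cuboid3D` class, its field names, and the yaw-only rotation are illustrative assumptions, not a FiveS Digital schema or any client's format; real projects follow the taxonomy and coordinate conventions in the customer's guidelines.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Cuboid3D:
    """Hypothetical 3D cuboid label for one object in one LiDAR frame."""
    track_id: str                        # stays the same for an object across frames
    category: str                        # e.g. "car", "pedestrian" (taxonomy is project-specific)
    center: Tuple[float, float, float]   # x, y, z in metres (assumed sensor frame)
    size: Tuple[float, float, float]     # length, width, height in metres
    yaw: float                           # rotation about the vertical axis, in radians
    occluded: bool = False               # simple occlusion flag (assumed)

    def bev_corners(self) -> List[Tuple[float, float]]:
        """Return the four bird's-eye-view corners of the cuboid footprint."""
        cx, cy, _ = self.center
        length, width, _ = self.size
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        half = [(length / 2, width / 2), (length / 2, -width / 2),
                (-length / 2, -width / 2), (-length / 2, width / 2)]
        # Rotate each corner offset by yaw, then translate to the cuboid centre.
        return [(cx + dx * c - dy * s, cy + dx * s + dy * c) for dx, dy in half]


box = Cuboid3D("veh-001", "car", center=(10.0, 0.0, 0.0), size=(4.0, 2.0, 1.5), yaw=0.0)
print(box.bev_corners())
```

Keeping `track_id` on every per-frame label is what makes unique ID maintenance and trajectory annotation checkable later.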

Proven Delivery for Autonomous Vehicle Projects

50 Million+ Annotations Annually: Delivered across all industries, backed by large-scale data operations experience.

Automotive-Scale Capacity: Process hundreds of thousands of frames monthly with teams of 20 to 200+ trained annotators deployed within 2-3 weeks.

Quality Processes: Multi-tier validation including peer review, supervisor checks, automated consistency verification, geometric validation, and temporal tracking audits.

Fast Turnaround: 2-3 week pilot projects, production operations within 3-4 weeks, daily or weekly batch deliveries for ongoing data streams.

Cost Efficiency: 40-60% savings versus building in-house automotive annotation teams with 3D annotation infrastructure and training overhead.

Flexible Scaling: Adapt team size to data collection volumes, testing phases, and development timelines, scaling up during intensive periods and down during slower phases.
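As an illustration of the automated checks named under Quality Processes (geometric validation and temporal tracking audits), the sketch below flags three common label problems. The function name `audit_tracks`, the dictionary keys, and the 5-metre-per-frame threshold are all assumptions made for this example; actual rules and thresholds would come from the project guidelines.

```python
import math
from collections import defaultdict


def audit_tracks(annotations, max_jump_m=5.0):
    """Return (frame, track_id, issue) tuples for suspicious labels.

    `annotations` is a list of dicts with the assumed keys
    frame (int), track_id, category, center (x, y, z), size (l, w, h).
    """
    issues = []
    by_track = defaultdict(list)
    for ann in annotations:
        # Geometric validation: every cuboid dimension must be positive.
        if min(ann["size"]) <= 0:
            issues.append((ann["frame"], ann["track_id"], "non-positive dimension"))
        by_track[ann["track_id"]].append(ann)

    for track_id, items in by_track.items():
        items.sort(key=lambda a: a["frame"])
        for prev, cur in zip(items, items[1:]):
            gap = cur["frame"] - prev["frame"]
            if gap == 0:
                issues.append((cur["frame"], track_id, "duplicate track id in frame"))
                continue
            # Temporal audit: a track should keep one category for its lifetime.
            if cur["category"] != prev["category"]:
                issues.append((cur["frame"], track_id, "category changed"))
            # Temporal audit: centre should not move implausibly far per frame.
            if math.dist(prev["center"], cur["center"]) / gap > max_jump_m:
                issues.append((cur["frame"], track_id, "implausible jump"))
    return issues
```

Checks like these do not replace peer review or supervisor checks; they only surface candidates for a human reviewer to confirm or dismiss.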